Michigan Student Artificial Intelligence Lab

A student organization focused on exploring cutting-edge artificial intelligence education, application and research.

MSAIL is a student organization devoted to artificial intelligence research. We strive to spread our passion for artificial intelligence throughout the University of Michigan student body, regardless of demographic or academic standing. As of Winter 2024, our main operations are education, projects, and reading groups.

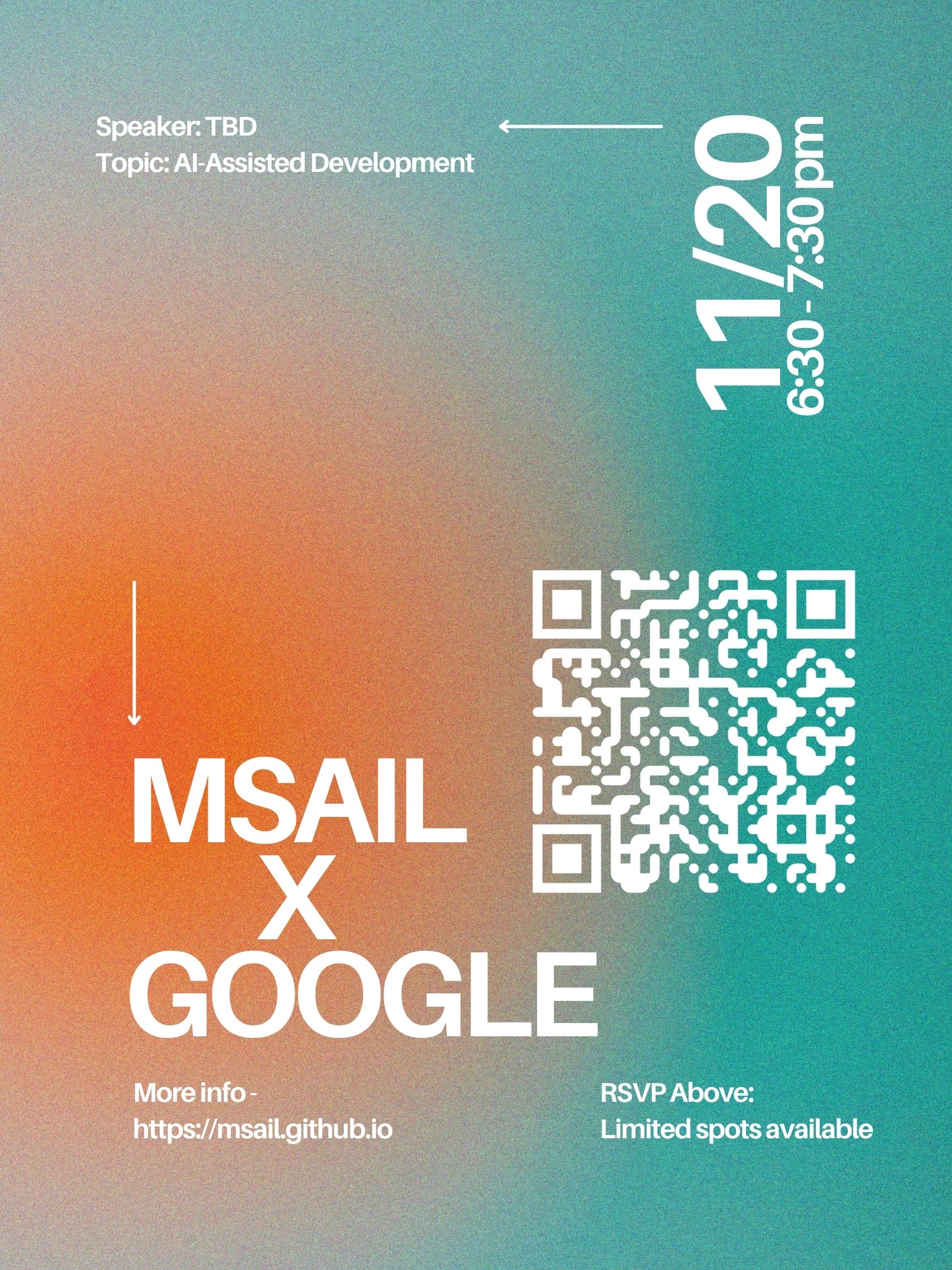

MSAIL x Google

We are excited to announce that MSAIL will be hosting an event in collaboration with Google and the UM Artificial Intelligence Laboratory! Join us on Thursday, November 20, from 6:30pm to 7:30pm EST for a discussion on AI-Assisted Development and Coding, featuring Google representatives who will share insights into how AI is transforming the software development process and what the future of AI-powered tools looks like.

There are limited spots available, so make sure to RSVP as soon as possible!

Molly Welch is a Partner at Radical Ventures, based in San Francisco. Molly invests in early-stage AI-native startups, including infrastructure and application AI startups. She has worked with Radical portfolio companies Datology, Muon Space, P-1, Solver, Spara, World Labs, and others not yet public.

Prior to Radical, Molly worked on the Google Brain team, collaborating with research leadership on commercial initiatives and special projects. During graduate school, she also worked on AI policy at Lyft and as a venture investor at frontier tech VC Playground Global.

Molly holds a B.A. with distinction from Stanford University, a Master's in Public Policy from the Harvard Kennedy School, and an MBA with distinction from Harvard Business School.

Who: Molly Welch

When: Tuesday, September 23, 6:30pm EST

RSVP for this event!